Designing Effective Rubrics

Overview

A rubric is a well-organized grading guide. If you’ve ever spent a weekend writing the same feedback on 40 papers or struggled to explain why one B+ differs from another, effective rubric design can help.

A well-crafted rubric gives students clear expectations before they begin working, ensures consistent evaluation across multiple graders or sections, and saves you from having to write the same feedback over and over again.

Even better, recent research shows that students who receive rubrics in advance perform better and report less anxiety about assignments (Taylor et al., 2024).

This guide focuses on practical rubric design that values thinking processes over easily replicated products. You’ll find strategies for creating assessment tools that support both student learning and sustainable grading practices.


What is a Rubric?

A rubric is a grading guide that links each learning outcome for an assignment to its own evaluation criterion and then describes what different levels of performance look like for each criterion. Having this clarity up front enables students to work directly toward your expectations, and makes evaluating the work they produce much faster after they turn it in.

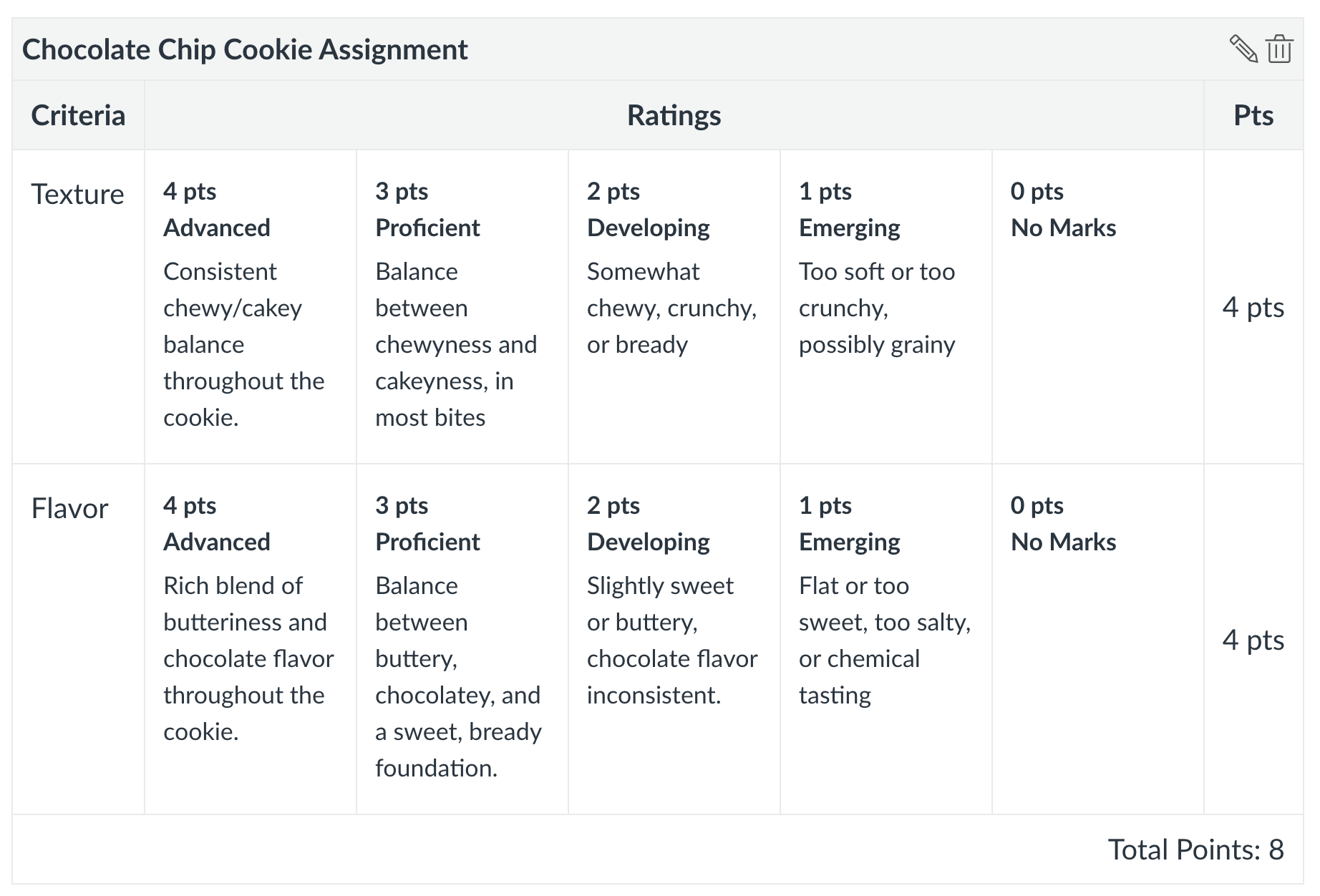

Rubrics can come in many shapes and sizes. Here is a simple example:

Evaluating a Chocolate Chip Cookie

What are the steps to creating a rubric?

- Identify 3-5 key learning outcomes for your assignment

- Transform each outcome into a rubric criterion

- Describe what meeting that criterion looks like (proficient level). Focus on observable behaviors and products, not effort or attitude

- Differentiate by describing higher and lower performance levels

- Review for bias, clarity, and alignment

- Test with a sample of student work

- Revise based on use

How do I write effective rubric language?

Clear criteria language reduces follow-up questions, minimizes grade disputes, and enables students to self-assess their progress before submission. The difference between “shows improvement” and “integrates feedback to strengthen argument structure” can mean 30 minutes of additional explanation per student, or the difference between students understanding expectations and sending panicked emails at midnight.

Well-written criteria also protect against AI shortcuts by specifying the type of thinking required rather than the product delivered. When students understand exactly how their cognitive work will be evaluated, they invest in developing those skills rather than seeking workarounds.

The key to effective rubric language is specificity. Replace subjective judgments with observable evidence of thinking:

Transform Vague Language into Observable Actions

This process can be tricky because often we “know it when we see it,” which unfortunately doesn’t help students. Ideally, you want to move from subjective language (e.g. “good analysis” or “creative solution”) to specific language (e.g. “Identifies underlying assumptions and evaluates their validity” or “Applies concepts in unexpected context with clear rationale”). This shows students what to look for in their own work.

It may also be useful to shift language from a deficit model (e.g. “Lacks evidence” or “Insufficient depth”) towards a more growth model (e.g. “Presents initial claim with emerging support” or “Begins to explore implications beyond surface observations”). In doing so, you can help the student understand that the skills are still in development rather than absent.

Build Progressive Skill Levels

Rather than just adding “more” at each level, describe qualitatively different thinking. For example:

| | Emerging | Developing | Proficient | Advanced |
| --- | --- | --- | --- | --- |
| Critical Analysis | Describes what sources say about the topic | Questions assumptions within individual sources | Evaluates credibility across conflicting sources | Reimagines the debate by proposing alternative frameworks |
| Problem-Solving | Applies provided formula to similar problems | Selects appropriate method from available options | Adapts methods to fit novel contexts | Creates original approach when existing methods fall short |

Adapt Language to Discourage AI Shortcuts

A rubric is also a place where you can redirect students away from inappropriate AI use. For instance, you might replace “summarizes reading” with “Connects reading to Tuesday’s debate on methodology”. Rather than “define key terms”, you can try “Distinguishes terms using examples from lab exercises”. You can also ask for more specific work by replacing “provide three examples” with “Selects examples that address peer’s counterargument”. In each case, the rubric moves from generic tasks to course-specific requirements that make AI use harder (or at least more evident).

Quick Diagnostic

If students still ask “what do you want?” your criteria might be too abstract.

Test each criterion:

- Could a student check their own draft against this?

- Does it describe something visible rather than inferred?

- Could two TAs identify this in student work independently?

If not, add specificity. “Demonstrates critical thinking” becomes “Questions authors’ assumptions using evidence from conflicting sources.”

Tips for success and pitfalls to avoid

Managing Rubric Complexity

Resist assessment creep. Limit rubrics to 4-6 criteria focused on core learning outcomes. If everything is important, nothing is.

Weight thoughtfully. Ensure point distribution reflects learning priorities, not ease of grading. If critical thinking matters most, it should carry the most weight, even if formatting is easier to assess.

Consider cognitive load. Students can typically focus on improving 2-3 areas simultaneously. Design rubrics that guide attention to what matters most for their development at this stage.
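To make the weighting advice concrete, here is a minimal Python sketch of how criterion weights change an overall score. The criterion names, the 45/30/25 weights, the 4-level scale, and the `weighted_score` helper are illustrative assumptions, not a prescribed scheme.

```python
# Hypothetical weights (must sum to 1.0); names and values are illustrative only.
WEIGHTS = {"critical_thinking": 0.45, "evidence": 0.30, "formatting": 0.25}
MAX_LEVEL = 4  # assumes a 4-level scale (1 = emerging ... 4 = advanced)


def weighted_score(levels, weights=WEIGHTS, max_level=MAX_LEVEL):
    """Return a 0-100 score from per-criterion levels and criterion weights."""
    if set(levels) != set(weights):
        raise ValueError("levels and weights must cover the same criteria")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return 100 * sum(weights[c] * levels[c] / max_level for c in weights)


# Two hypothetical students whose levels add up to the same total (9),
# but whose strengths sit in different criteria.
strong_thinker = {"critical_thinking": 4, "evidence": 3, "formatting": 2}
strong_formatter = {"critical_thinking": 2, "evidence": 3, "formatting": 4}

print(round(weighted_score(strong_thinker), 2))    # 80.0
print(round(weighted_score(strong_formatter), 2))  # 70.0
```

With these assumed weights, the student who is strongest in critical thinking scores ten points higher than the student who is strongest in formatting, even though their unweighted totals match. Running your own weights through a check like this is one way to see whether the point distribution rewards what you care about most.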

Transparency and Student Involvement

Share rubrics before assignment distribution. Students need time to understand expectations and plan their approach.

Decode your rubric in class. Walk through exemplars showing what each level looks like in practice. Abstract descriptions become concrete through examples.

Consider co-creation for major assignments. When students help develop criteria, they internalize expectations and take ownership of their learning. Even 15 minutes of class discussion about “what makes a strong analysis” can improve performance.

Implementation and Revision

Track patterns after each use. If all students struggle with one criterion, the problem might be your assignment design or instruction, not their ability.
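If you record scores in a spreadsheet or export them from your learning management system, a short script can surface these patterns. The sketch below is one possible approach; the criterion names, the sample scores, the 1-4 scale, and the 2.5 threshold are all made up for illustration.

```python
from statistics import mean

# Hypothetical scores: one dict per student, levels on an assumed 1-4 scale.
scores = [
    {"thesis": 3, "evidence": 2, "communication": 3},
    {"thesis": 4, "evidence": 1, "communication": 3},
    {"thesis": 3, "evidence": 2, "communication": 4},
]


def criterion_means(all_scores):
    """Average level per criterion across all students."""
    criteria = all_scores[0].keys()
    return {c: mean(s[c] for s in all_scores) for c in criteria}


def flag_low(all_scores, threshold=2.5):
    """Return criteria whose class average falls below the (assumed) threshold."""
    return [c for c, m in criterion_means(all_scores).items() if m < threshold]


print(flag_low(scores))  # ['evidence']
```

A criterion that is flagged for the whole class is a prompt to revisit the assignment design or instruction before concluding that students lack the ability.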

Revise iteratively. Your first rubric won’t be perfect. Note questions students ask, feedback you write repeatedly, and criteria that take excessive time to score. Adjust accordingly.

Build your rubric library but customize for context. A rubric that works for seniors won’t fit first-year students. Save templates but adapt criteria to each course’s specific needs and student population.

Plan for calibration if multiple graders are involved. Score sample papers together, discussing discrepancies until you reach 80% agreement.
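One simple way to check the 80% target is exact percent agreement: the share of sample papers on which two graders chose the same level. The sketch below assumes that measure (the guide does not specify one), and the ratings are invented for illustration. Chance-corrected statistics such as Cohen's kappa are a stricter alternative.

```python
def percent_agreement(grader_a, grader_b):
    """Share of papers (0-100) on which two graders assigned the same level."""
    if len(grader_a) != len(grader_b) or not grader_a:
        raise ValueError("need two equal-length, non-empty lists of ratings")
    matches = sum(a == b for a, b in zip(grader_a, grader_b))
    return 100 * matches / len(grader_a)


# Hypothetical levels two graders gave the same ten sample papers on one criterion.
grader_a = [3, 2, 4, 3, 1, 2, 3, 4, 2, 3]
grader_b = [3, 2, 3, 3, 1, 2, 3, 4, 3, 3]

agreement = percent_agreement(grader_a, grader_b)
print(agreement)        # 80.0
print(agreement >= 80)  # True
```

The two papers where the graders disagree are the ones worth discussing in the calibration session.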

Common Pitfalls to Avoid

Overlapping criteria: Each criterion should assess something distinct

Hidden criteria: Don’t grade on elements not in the rubric

Vague descriptors: “Somewhat effective” is made clearer as “Addresses 2-3 audience concerns”

Point misalignment: Ensure point distribution reflects learning priorities. Note also that assigning numeric values to performance levels, and even weighting the criteria, is optional in rubric design, especially for mastery-based grading or ungrading approaches.

Static rubrics: Revise based on student performance patterns

Using AI tools to draft a rubric

AI can accelerate rubric drafting, but faculty expertise must drive the design. Use AI as a thought partner, not a replacement for pedagogical judgment.

| Appropriate uses | Never use AI to |


Effective Prompting Strategies

Recommended Starter Prompt:

“I teach [course name] at the [level] undergraduate level. I need help drafting rubric criteria for [assignment type]. My learning objectives are: [list 2-3].

Generate 4 observable criteria that assess these objectives. For each criterion, provide descriptions for three performance levels (emerging, proficient, advanced) using parallel structure. Focus on describing cognitive skills rather than products. Avoid terms like ‘good’ or ‘excellent.’ Each description should be specific enough that two graders would agree on the rating.

Important: These criteria should assess thinking processes that require engagement with course-specific content, not generic skills.”

Follow-up Refinement Prompts:

- “Revise these criteria to emphasize process over product”

- “Add discipline-specific language for a [field] course”

- “Check for potential cultural or linguistic bias”

- “Make the distinction between proficient and advanced more meaningful”

- “Suggest how to adapt this criterion to prevent AI completion”

- “Weight x criteria as 45% of the grade, y criteria as 30%, and z as 25%”

Quality Control Checklist

After AI generates draft criteria, evaluate:

- Does each criterion reflect your specific course content?

- Would students from diverse backgrounds understand expectations?

- Can you envision actual student work meeting these criteria?

- Do criteria overlap or assess the same skill twice?

- Is disciplinary thinking accurately represented?

Remember: AI doesn’t understand your students, institutional context, or disciplinary nuances. Every AI-generated criterion requires substantial customization.

Sample rubrics

Example 1: Analytic Rubric for Research-Based Argument (Extract)

| Criteria | Emerging (1) | Developing (2) | Proficient (3) | Advanced (4) |
| --- | --- | --- | --- | --- |
| Thesis & Argument | States a position on the topic | Presents a clear thesis with basic reasoning | Develops a nuanced thesis with logical progression of ideas | Articulates sophisticated thesis; anticipates and addresses counterarguments |
| Evidence & Analysis | Includes some relevant sources | Integrates sources to support main points | Synthesizes multiple sources to build compelling evidence | Critically evaluates sources; identifies gaps or tensions in literature |
| Communication of Ideas | Ideas are present but may require effort to follow | Communicates main ideas clearly; minor issues don’t impede understanding | Expresses complex ideas clearly and effectively | Demonstrates eloquent expression of complex ideas with audience awareness |

Example 2: Single-Point Rubric for Oral Presentation

| Areas for Growth | Proficiency Criteria | Evidence of Exceeding |
| --- | --- | --- |
| ← | Content Knowledge: Demonstrates comprehensive understanding of topic through accurate information and thoughtful responses to questions | → |
| ← | Organization: Presents information in logical sequence with clear introduction, body, and conclusion | → |
| ← | Engagement: Maintains audience attention through eye contact, vocal variety, and interactive elements | → |
| ← | Visual Support: Uses visuals that enhance understanding without overwhelming or distracting | → |

Example 3: Holistic Rubric for Reflection Paper

Level 4 – Exemplary: Demonstrates deep critical reflection connecting personal experience to course concepts. Analyzes assumptions and considers multiple perspectives. Identifies specific insights and articulates plans for applying learning. Ideas communicated with clarity and sophistication.

Level 3 – Proficient: Shows thoughtful reflection linking experience to course material. Questions some assumptions and shows awareness of different viewpoints. Identifies meaningful takeaways and suggests applications. Ideas clearly communicated.

Level 2 – Developing: Provides basic reflection with some connections to course concepts. Limited questioning of assumptions. Identifies general lessons learned. Communication is adequate though may lack depth or clarity in places.

Level 1 – Emerging: Offers surface-level description of experience with minimal connection to course material. Little evidence of critical thinking. Vague about takeaways or applications. Ideas may be difficult to follow or underdeveloped.

References

Bearman, M., & Ajjawi, R. (2023). Learning to work with the black box: Pedagogy for a world with artificial intelligence. British Journal of Educational Technology, 54(5), 1160-1173.

Brookhart, S. M. (2013). How to create and use rubrics for formative assessment and grading. ASCD.

Perkins, M., Furze, L., Roe, J., & MacVaugh, J. (2024). The artificial intelligence assessment scale (AIAS): A framework for ethical integration of generative AI in educational assessment. Journal of University Teaching & Learning Practice, 21(06).

Taylor, J., Kisby, C., & Reedy, A. (2024). Rubrics in higher education: An exploration of undergraduate students’ understanding and perspectives. Assessment & Evaluation in Higher Education, 49(6), 799-809.

Looking for more practical tips relating to rubrics? Check out Rubric Practices in Action.